Scientific Achievement

Researchers at the BELLA Center in the Accelerator Technology & Applied Physics Division and the Engineering Division of the U.S. Department of Energy (DOE)’s Lawrence Berkeley National Laboratory (Berkeley Lab) have used artificial intelligence and machine learning to accurately predict the position of a high-power laser before it strikes a target, achieving micron-scale precision.

This advancement provides a foundation for improving the stabilization and control of high-power, high-intensity lasers used in next-generation particle accelerators called laser-plasma accelerators (LPAs). It also strengthens the case for using these test facilities to develop methods, as part of the DOE’s AI Genesis Mission, that will enhance all accelerators.

Significance and Impact

The significance of this work extends far beyond a single application, such as laser pointing in low-repetition-rate LPAs. For example, in many applications within the DOE accelerator complex, it is important that the parameters of a beam (an electron bunch, a laser pulse, or a muon beam) remain stable and controlled at specific times, such as during collision, at the moment of injection into a secondary structure, or in a pump-probe event.

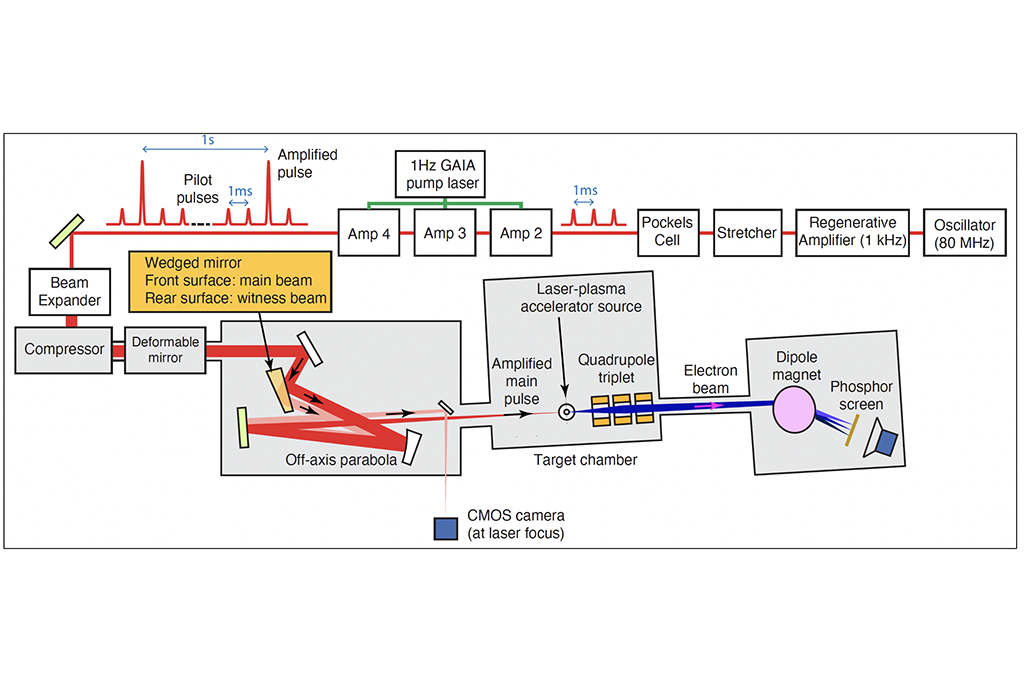

This research establishes the groundwork for a broad range of predictive stabilization and active correction applications. The key to achieving this broad capability was using the BELLA laser as a versatile test bed in a collaboration among researchers from BELLA, ATAP’s Berkeley Accelerator and Controls Instrumentation Program, and Berkeley Lab’s Engineering Division. The flexibility of this test facility enabled rapid development, a factor that remains important in other projects, such as the Genesis Mission.

Research Details

Time series forecasting using machine learning

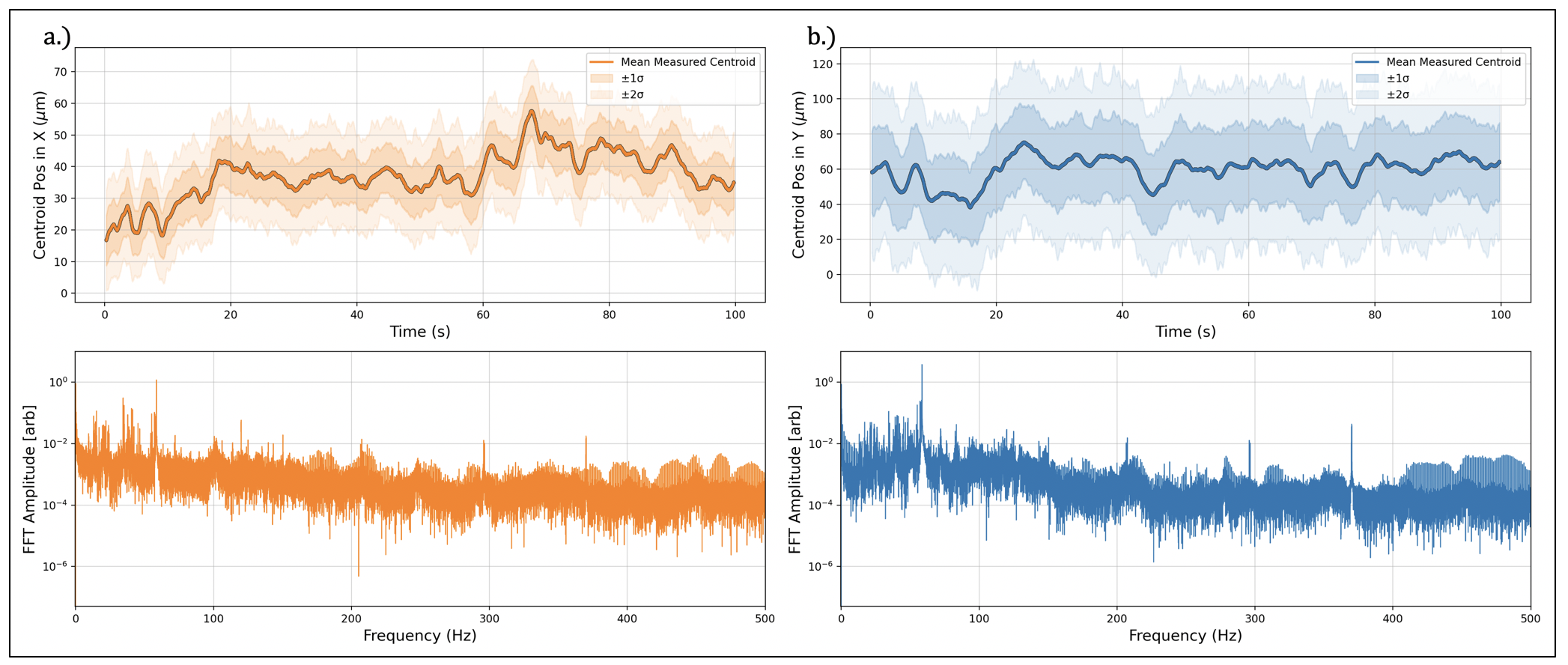

One hundred seconds of horizontal (𝑥) and vertical (𝑦) centroid data were taken at the final laser focus of the 100 TW HTU beamline at LBNL’s BELLA Center. Subplots (a) and (b) show the running-average centroid over this 100 s span, along with ±1𝜎 and ±2𝜎 bands, for 𝑥 and 𝑦, respectively. The moving average and standard deviation are computed over a 500 ms window. The corresponding FFT of each time series trace is also shown and is computed over the entire 100 s window. The spectral signatures are deterministic, with notable frequency content between 10 and 100 Hz, well beyond the bandwidth of conventional, commercially available PID-based feedback stabilization systems that are integrated with larger (multi-inch) optics.
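The quantities in this caption (a moving average, a moving standard deviation, and an FFT of the full trace) can be sketched in a few lines of NumPy. This is only an illustrative sketch, not the team's analysis code: the 1 kHz sample rate, the shortened 10 s duration, the 30 Hz test tone, and all function and variable names are assumptions, and the trace is synthetic rather than measured data.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def running_mean_std(trace, window):
    """Moving mean and standard deviation over every full window of the trace."""
    trace = np.asarray(trace, dtype=float)
    windows = sliding_window_view(trace, window)
    return windows.mean(axis=1), windows.std(axis=1)


def amplitude_spectrum(trace, sample_rate_hz):
    """One-sided FFT magnitude of the mean-subtracted trace."""
    trace = np.asarray(trace, dtype=float)
    magnitude = np.abs(np.fft.rfft(trace - trace.mean())) / trace.size
    freqs_hz = np.fft.rfftfreq(trace.size, d=1.0 / sample_rate_hz)
    return freqs_hz, magnitude


# Synthetic stand-in for a centroid trace (assumed values, not BELLA data).
sample_rate_hz = 1000.0            # assumed acquisition rate
n_samples = 10_000                 # 10 s, shortened to keep the example light
t = np.arange(n_samples) / sample_rate_hz
rng = np.random.default_rng(0)
centroid_um = 3.0 * np.sin(2 * np.pi * 30.0 * t) + 0.5 * rng.standard_normal(n_samples)

window = int(0.5 * sample_rate_hz)  # 500 ms window, as in the caption
mean_um, std_um = running_mean_std(centroid_um, window)
freqs_hz, magnitude = amplitude_spectrum(centroid_um, sample_rate_hz)
peak_hz = freqs_hz[np.argmax(magnitude)]  # dominant disturbance frequency
```

Plotting `mean_um` with bands at `mean_um ± std_um` and `mean_um ± 2 * std_um` gives the kind of shaded running-average view the caption describes, while `freqs_hz` against `magnitude` gives the spectrum.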

Laboratory environments are noisy. Mechanical vibrations from generators, atmospheric thermal fluctuations, and other disturbances create a complex, nonlinear spectral signature that can be challenging to eliminate. These disturbances influence the trajectory of pulse-based lasers used in LPAs. Using a high-repetition-rate (> 1 kHz) laser, the researchers precisely measured the beam position. Although the disturbance spectrum may vary over time, it remains predictable, so a series of measurements can be used to accurately forecast beam positions well into the future. Predicting these future positions could significantly enhance methods for stabilizing, controlling, and understanding LPA-based lasers and the particle beams they produce.

Using machine learning to predict laser focus position

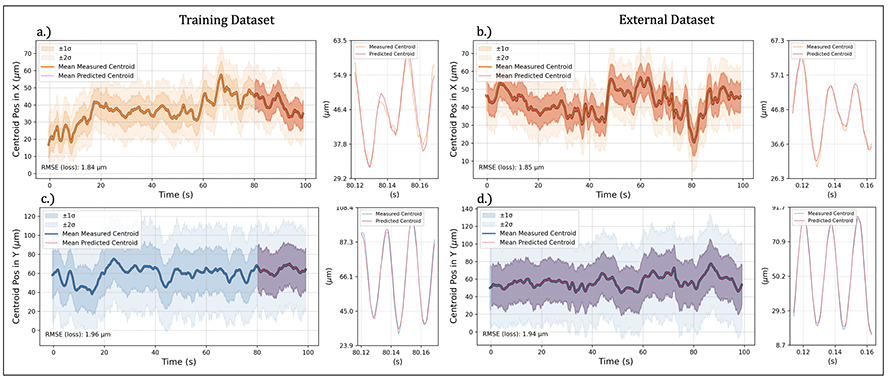

The training and validation results for the 1D convolutional neural networks are presented. Subplots (a) and (c) show the time series of centroid positions in the 𝑥 and 𝑦 directions, respectively. To facilitate visual representation across the entire dataset, a running average taken over 500 ms windows is used in the larger figure windows. The shaded regions in these figures represent one and two standard deviations (±1𝜎, ±2𝜎) from the mean. In addition, the model predictions for the cross-validation sets are overlaid in crimson, while the training portions remain in their original color. Model performance was evaluated using both the cross-validation sets and an independent external dataset collected 24 h after the training data acquisition. Subplots (b) and (d) present the model predictions and their corresponding performance metrics for the external datasets. These results are visualized using the same running average technique applied in subplots (a) and (c). Notably, the models achieved consistent performance, with an RMSE (calculated using the original unsmoothed centroid measurements) of < 2 μm for a 1/𝑒² beam radius of 34 μm across all validation tests, i.e., both the cross-validation and external datasets. The RMSE, or loss function, values are shown at the bottom left of each figure and correspond to the data range overlaid in crimson. Next to each figure is an inset plot of individual centroid measurements (no running average) spanning 50 ms to help illustrate model performance at higher frequencies.
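For reference, the RMSE quoted in this caption can be written out using the standard root-mean-square-error definition. The symbols below are notation introduced here for illustration and do not come from the publication: $c_i$ is the $i$-th measured (unsmoothed) centroid, $\hat{c}_i$ is the corresponding model prediction, $N$ is the number of predictions, and $w$ is the 1/𝑒² beam radius.

```latex
\mathrm{RMSE} = \sqrt{\frac{1}{N}\sum_{i=1}^{N}\left(\hat{c}_i - c_i\right)^{2}},
\qquad
\frac{\mathrm{RMSE}}{w} < \frac{2\,\mu\mathrm{m}}{34\,\mu\mathrm{m}} \approx 0.059
```

Read this way, the reported bound corresponds to a prediction error of under roughly 6% of the 1/𝑒² radius.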

The team measured the laser beam position at the focus of a 3-meter off-axis parabola 500 times in quick succession. This set of 500 position measurements was used as input for a neural network. The network learned to use this series of time-dependent data points to predict the position 11 time steps ahead. The results were remarkably accurate, with predictions correct to within 5% of the total beam size. For example, if the beam drifted by just 2 microns (a small fraction of its width), the neural network could still successfully predict that movement.
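The input and output framing described above (500 consecutive positions in, one position 11 steps later out) can be sketched as a sliding-window dataset. The sketch below is only illustrative. It assumes the 11 steps are counted from the last input sample, uses a synthetic trace at an assumed 1 kHz rate, and swaps in an ordinary least-squares linear predictor in place of the 1D convolutional neural network so that the example needs only NumPy. All names are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def make_forecast_pairs(trace, window=500, horizon=11):
    """Pair each run of `window` samples with the sample `horizon` steps after its last one."""
    trace = np.asarray(trace, dtype=float)
    n_pairs = trace.size - window - horizon + 1
    if n_pairs <= 0:
        raise ValueError("trace is too short for this window and horizon")
    inputs = sliding_window_view(trace, window)[:n_pairs]
    first_target = window - 1 + horizon
    targets = trace[first_target:first_target + n_pairs]
    return inputs, targets


def rmse(pred, truth):
    """Root-mean-square error between predictions and measurements."""
    return float(np.sqrt(np.mean((np.asarray(pred) - np.asarray(truth)) ** 2)))


# Synthetic centroid trace in microns (assumed values, not BELLA data).
rng = np.random.default_rng(1)
sample_rate_hz = 1000.0  # assumed
t = np.arange(5000) / sample_rate_hz
trace_um = (3.0 * np.sin(2 * np.pi * 30.0 * t)
            + 1.5 * np.sin(2 * np.pi * 62.0 * t + 0.4)
            + 0.3 * rng.standard_normal(t.size))

inputs, targets = make_forecast_pairs(trace_um)
split = int(0.8 * len(targets))  # chronological split: earlier data trains, later data tests

# Linear stand-in model (NOT the CNN from the article): least squares with a bias term.
design = np.hstack([inputs, np.ones((len(inputs), 1))])
weights, *_ = np.linalg.lstsq(design[:split], targets[:split], rcond=None)

rmse_linear = rmse(design[split:] @ weights, targets[split:])
# Baseline: assume the beam stays where it was at the last input sample.
rmse_persistence = rmse(inputs[split:, -1], targets[split:])
```

Comparing `rmse_linear` with `rmse_persistence` shows how much a forecaster gains over simply holding the last measured position. A neural network would be trained on the same `inputs` and `targets` arrays.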

Contact: Curtis Ervin Berger

Researchers: Curtis Ervin Berger, Sam Barber, Fumika Isono, Kyle Jensen, Joseph Natal, Anthony Gonsalves, and Jeroen van Tilborg.

Funding: The DOE Office of Science, Office of High Energy Physics supported the work presented here.

Publication: Curtis Berger, Anthony Gonsalves, Kyle Jensen, Jeroen van Tilborg, Dan Wang, Alessio Amodio, and Sam Barber. “Artificial intelligence time series forecasting for feed-forward laser stabilization,” Nuclear Inst. and Methods in Physics Research, A 1078, 170561 (2025). https://doi.org/10.1016/j.nima.2025.170561

For more information on ATAP News articles, contact caw@lbl.gov.